There is always some need for heat treatment in my workshop, for example, hardening, softening, and tempering of steel, hardening of aluminum alloys, etc. Often this is just done with a torch, by visual judgement and feel rather than measurement. While this works quite well for small parts and general tool steel, it doesn’t work for hardening of aluminum (precipitation hardening), and larger pieces of steel may crack. Even smaller pieces may suffer from uneven heating, resulting in distortion.

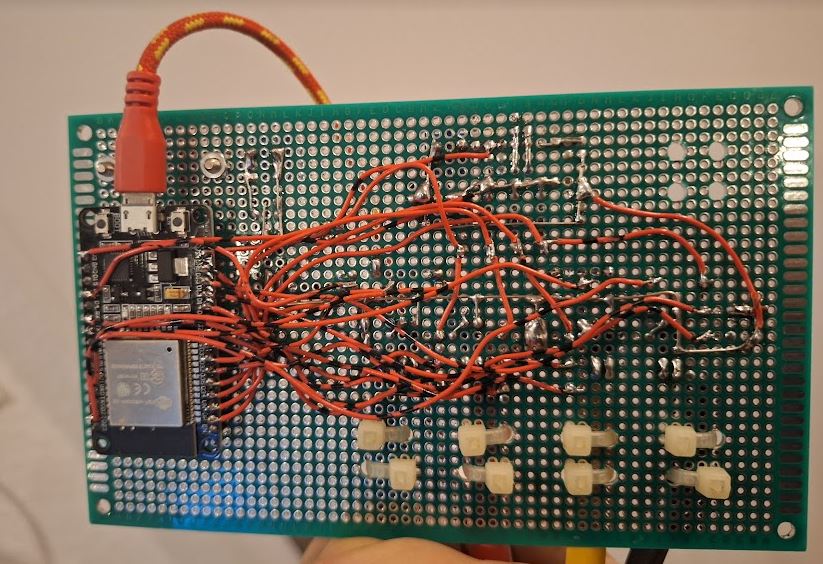

So far, a small electric oven has served for that: a German brand, “Naber”, already pretty dated, but it seems to have been used very rarely. Not long ago, I added a controller to allow temperature curves and automatically controlled heating and cooling.

But recently, screening the classifieds, I found a much better oven, a 4-side heated Heraeus K1150/3 that can handle much larger pieces for heat treatment.

It came pretty quickly, for just a little over 400 EUR, including delivery and including a cart. First thing I did, painted the cart a little with red paint – RAL3000 “feuerrot” as it is called officially. For years I carried around a can of such red paint, never thought I would ever actually need it.

The oven was originally built for 3-phase power, 380 V at 14.5 A. With today's mains voltage of 400 V, the power will be about 10% higher, since the heating power of a fixed resistance scales with the square of the voltage. In any case, I can connect it to my 16 A outlets.
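As a rough check of these numbers, here is a small Python sketch. It assumes a balanced, purely resistive 3-phase load whose resistance does not change with voltage or temperature, which is only an approximation for real heating coils. The function and variable names are my own, for illustration only.

```python
import math

def three_phase_power(v_line, i_line):
    """Power in watts of a balanced, purely resistive 3-phase load."""
    return math.sqrt(3) * v_line * i_line

V_RATED, I_RATED = 380.0, 14.5  # nameplate values from the text
V_MAINS = 400.0                 # present mains voltage

p_rated = three_phase_power(V_RATED, I_RATED)

# Assumption: fixed heater resistance, so current scales with voltage
# and power scales with voltage squared.
scale = V_MAINS / V_RATED
i_mains = I_RATED * scale
p_mains = p_rated * scale ** 2

print(f"rated power:  {p_rated / 1000:.1f} kW")
print(f"at 400 V:     {p_mains / 1000:.1f} kW ({(scale ** 2 - 1) * 100:.0f}% more)")
print(f"line current: {i_mains:.1f} A")
```

Under these assumptions the line current stays just below 16 A, but without much margin.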

The oven has quite significant heating power for its size, good for heating up metals quickly for hardening. Also, it is built such that it can be opened pretty safely when hot, to take out the glowing parts. Not all ovens (especially not common pottery ovens) can be opened when hot: the refractory bricks may break, or the coils may bend, or similar.

The next difficult task was to get the oven back onto the cart, which is not easy because of its bulky size and well over 100 kg of weight. Even with 3 people, it is impossible to carry, and not easy to grab. But with various pieces of wood and some small furniture rollers, I eventually managed to get it onto the cart with the help of a friend, and with no damage or injury!

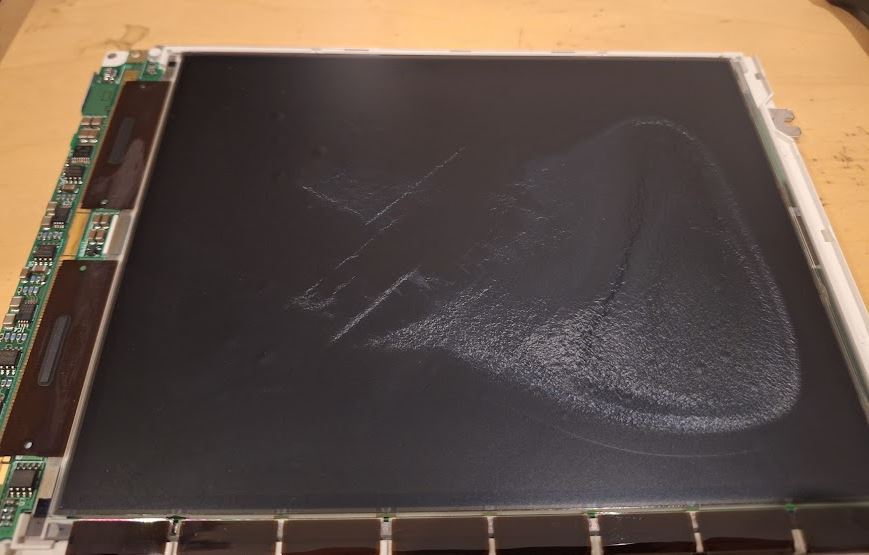

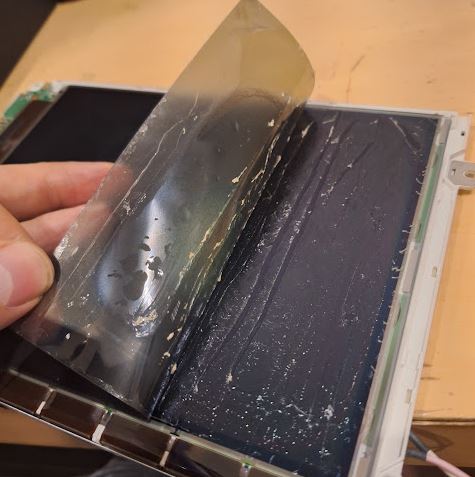

The bricks of the oven are all in good state, except for some loose parts at the front door. There, the inner (hot) layer is held to the door by 4 metal parts.

I fixed the loose parts with refractory glue, which is stable up to 1100°C and easy to use.

To do a proper job, I cleaned up all the old cement, and thoroughly roughened the mating surfaces.

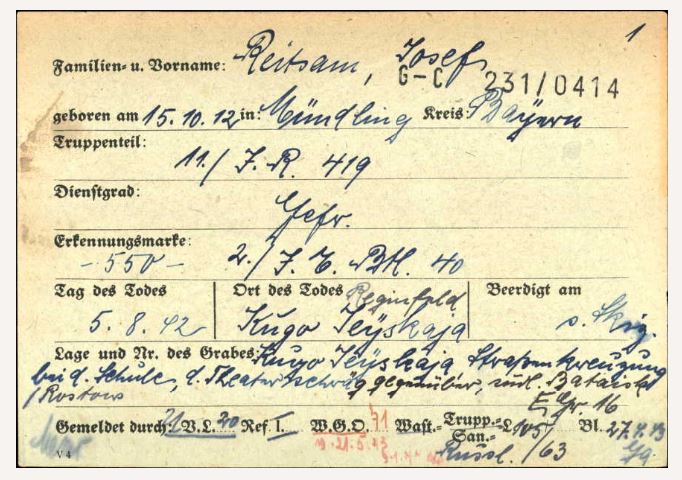

From the factory, the oven has a very sturdy Pallaplat (Au-Pd-Pt vs. Pt-Rh) thermocouple with very thick wire, certainly worth almost the 400 EUR I paid for the whole oven. It is connected to an analog temperature regulator and a nice 96×96 mm instrument.

These Pallaplat thermocouples have a thermoelectric coefficient several times larger than that of common Type S (Pt-PtRh) thermocouples.

My intention was to keep the old regulator and instrument, basically as a maximum-temperature limiter, and add a (secondary) controller with ramp/segment control. The oven, fortunately, already has provisions for a second thermocouple, so I pulled two new insulation tubes and a new S-type compensation wire from the oven to the control cabinet.
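To illustrate what ramp/segment control means, here is a minimal Python sketch of how a setpoint can be derived from a list of segments. This is not how the controller itself is programmed. The segment format and the example program are made up for illustration and are not a heat-treatment recipe.

```python
def setpoint(t_min, segments, start_temp=20.0):
    """Setpoint in °C at time t_min (minutes since program start).

    segments: list of (duration_min, end_temp) tuples. A segment whose
    end_temp equals the previous temperature is a hold (soak).
    """
    temp = start_temp
    for duration, end_temp in segments:
        if t_min < duration:
            # Linear interpolation within the current segment
            return temp + (end_temp - temp) * t_min / duration
        t_min -= duration
        temp = end_temp
    return temp  # program finished: stay at the last temperature

# Hypothetical program: ramp to 500 °C in 60 min, hold for 30 min,
# then cool to 200 °C over 120 min.
program = [(60, 500.0), (30, 500.0), (120, 200.0)]

for t in (0, 30, 75, 150, 300):
    print(t, "min ->", setpoint(t, program), "°C")
```

The secondary controller follows such a curve, while the old regulator, set to a fixed limit, only cuts in if the temperature ever exceeds that limit.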

Be careful when connecting compensation wires for thermocouples, because the color codes are misleading: BLACK is positive, RED is negative!

To make things even more complicated, the color codes differ from country to country…

To install the new temperature controller, we need to modify the control cabinet a little. So far there has been a timer in it, which will no longer be needed, but its opening is not quite large enough.

Cutting it a little larger with a grinding disc, then filing: quite laborious to do precisely!

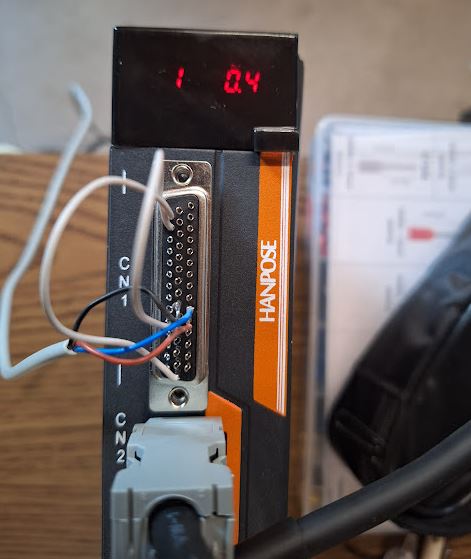

Rather than an expensive Western part, I resorted to a part imported from China, model PMA-900. It is available with various options, alarms, control output choices and so on.

It is a nice part, with many functions and a complicated manual, but it works pretty well.

Heraeus used a Siemens contactor to switch the heater on and off, but for finer control, I selected a 40 A solid state relay, which can modulate the power much more precisely, and without wear.

Installed: the heat sink came with the relay, and there is a small fan to keep it cool even in the control cabinet. Later, I added a plastic cover for touch protection, and some warning signs. 400 Volts is no joke!
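One common way to modulate heater power with a relay is time-proportioning: within a fixed cycle, the relay is on for a fraction of the time equal to the desired power. The Python sketch below shows the idea only. The 2-second cycle is an assumed value, not the setting of this controller, and the function name is my own.

```python
def ssr_state(t_s, duty, period_s=2.0):
    """Return True if the relay should be on at time t_s (seconds).

    duty is the requested power fraction (0.0 to 1.0); period_s is the
    cycle time, an assumed value for illustration.
    """
    duty = min(max(duty, 0.0), 1.0)  # clamp to the valid range
    return (t_s % period_s) < duty * period_s

# At 25% power, the relay is on for the first 0.5 s of every 2 s cycle.
for t in (0.0, 0.4, 0.6, 1.9, 2.4):
    print(f"t = {t:.1f} s: {'on' if ssr_state(t, 0.25) else 'off'}")
```

A mechanical contactor switching every few seconds would wear out quickly, which is why such short cycles are better left to a solid state relay.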

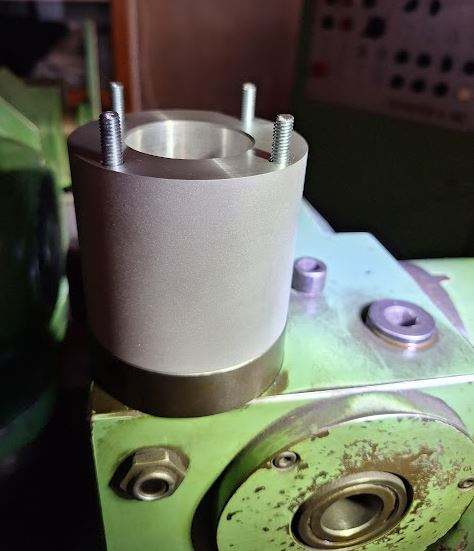

The S-type thermocouple, about 300 mm long, I also got from China, at a very reasonable price. It is already protected by a ceramic tube, but the diameter didn’t fit the existing hole, and it looked fragile enough to be easily damaged by rough handling and over time.

Fortunately, I found a surplus protection tube of exactly the right diameter (inner and outer), made of 99+% sintered aluminum oxide. It was just a little too long.

With a diamond wheel I cut it slowly, because it is a single piece that is not easy to get again (new protection tubes of this kind cost nearly 250 EUR in Germany, so discounted surplus parts are the only reasonable choice).

Inside the oven, you can easily see the custom Heraeus brickwork (also used for smaller models of that oven), 4-side heating, and the bottom is normally covered with silicon carbide tiles.

Finally, a little brush-up of the outside with a high-temperature paint, to protect it from rust. I prefer the MIPA brand, silver paint. A small 375 ml can will go a long way. It is resistant up to 800°C and, in my experience, stops most rust and can be re-applied from time to time if needed without building up thick layers.

It is a real paint, with a good smell and many solvents, not the water-based junk for wood. Real paint!

Now, with all repairs done, the oven is looking good again. Everything cleaned up and with a new controller. Great addition to the workshop (only trouble is, it is very heavy, and does consume a lot of space).

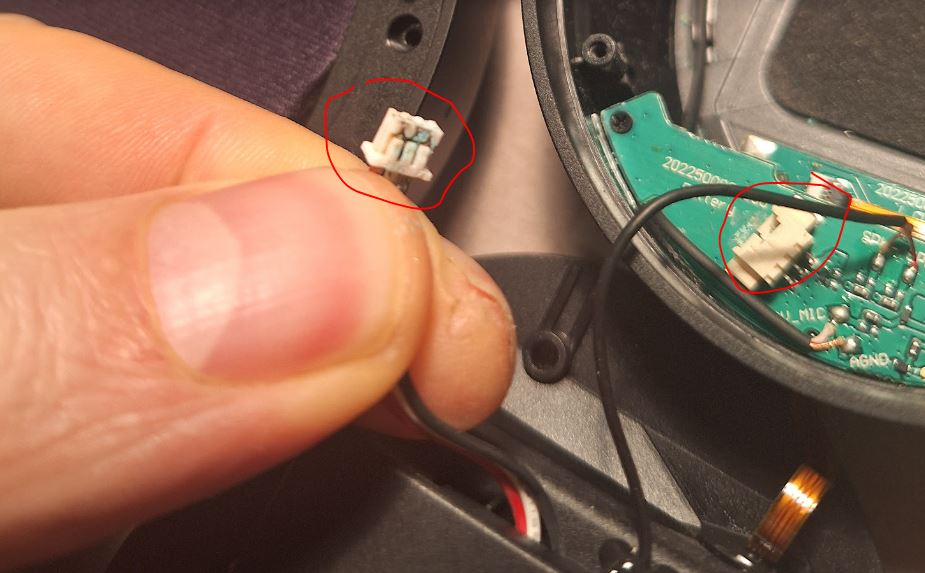

One final note on the power cables of machines, especially industrial machines purchased used from unknown sources: never trust these cables! They may have no ground connected, may be damaged, may have been repaired by people without proper electrical training and without the proper tools, or may simply have suffered abuse in an industrial environment. In a household, at low power, this is mostly not a problem. But here we are talking about larger currents, and these should not flow through cables with compromised integrity.

It was the same this time: in the plug, the cable ends were badly done, with end sleeves that were too short and overtightened. On most conductors, only about half of the strands were intact.

At the oven end, the inlet to the control cabinet is a little tight. The previous person who worked on that cable didn’t even bother to fit the cable properly, and just removed the insulation and put on some tape… asking for trouble.

Luckily, I had a few meters of good cable around, and managed to fit it through, and now all is nice and safe!