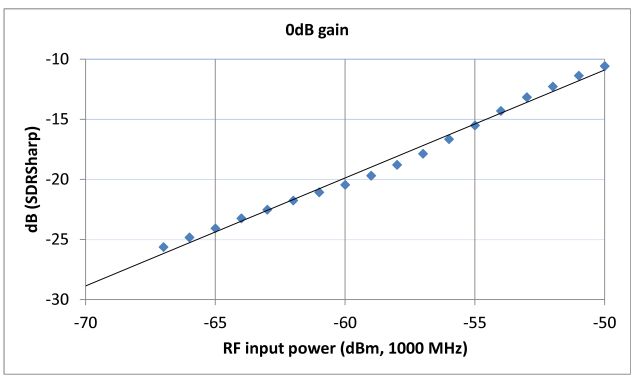

Some more linearity tests, now over a narrow range, with a precisely linear and calibrated source, measured at 1000 MHz; otherwise everything is the same as in the earlier post.

These tests were done at a 0 dB gain setting, and the input power was varied in 1 dBm steps.

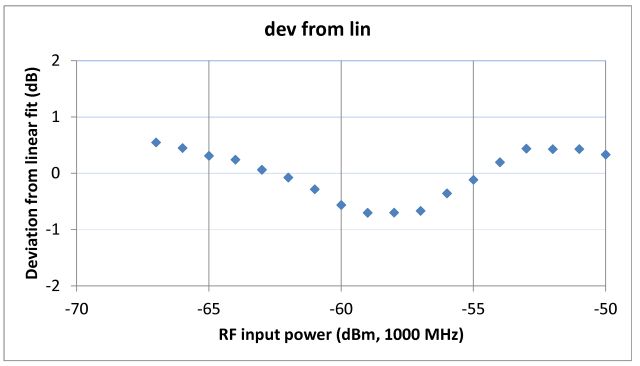

The results speak for themselves: the SDR USB stick is quite accurate if you want to make a relative comparison of power levels over a range of 10 dB or so. Accuracy may be ±0.5 dB or better, provided that the slope has been determined for the gain setting in use (don't change the gain setting if you need accurate measurements).

If you want to measure insertion loss, the best approach is to vary the source power in calibrated steps (using a high-precision 1 dB step attenuator) and keep the SDR USB stick at a nearly constant reading, within a narrow window of ±1 dB. The measurement error is then limited almost entirely to the error of the precision attenuator. For such tests, it is always good practice to put a 6 dB or 10 dB attenuator at the SDR USB stick input, to avoid artifacts caused by the stick's non-negligible input mismatch (poor return loss, i.e., high SWR).
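Both the substitution method and the benefit of an input pad can be put into numbers. The Python sketch below is a hedged illustration under stated assumptions: near the operating point the reading is taken to follow reading = slope × P + c, with the slope determined beforehand for the gain setting in use, so any small leftover reading difference can be corrected through it. For mismatch, it uses the standard worst-case limits 20·log10(1 ± |Γs|·|ΓL|) and the fact that an ideal, well-matched pad of A dB improves the return loss seen looking into it by 2A dB. The function names and all return-loss and attenuator values are hypothetical, not measurements of any particular stick, source, or DUT.

```python
import math


def substitution_insertion_loss(a_ref_db, r_ref_db, a_dut_db, r_dut_db, slope=1.0):
    """Insertion loss (dB) by the substitution method (hypothetical helper).

    Reference: DUT out, step attenuator at a_ref_db, SDR reading r_ref_db.
    Measurement: DUT in, attenuator reduced to a_dut_db, reading r_dut_db.
    With reading = slope * P + c:  IL = (a_ref - a_dut) + (r_ref - r_dut) / slope
    """
    return (a_ref_db - a_dut_db) + (r_ref_db - r_dut_db) / slope


def gamma(return_loss_db):
    """Magnitude of the reflection coefficient for a given return loss (dB)."""
    return 10 ** (-return_loss_db / 20)


def mismatch_limits_db(rl_source_db, rl_load_db):
    """Worst-case mismatch error limits (dB) between a source and a load."""
    g = gamma(rl_source_db) * gamma(rl_load_db)
    return 20 * math.log10(1 - g), 20 * math.log10(1 + g)


# Hypothetical substitution run: attenuator stepped from 10 dB to 7 dB with the
# DUT inserted, reading ends up 0.1 dB lower, fitted slope 0.98 dB/dB.
il = substitution_insertion_loss(10.0, -40.0, 7.0, -40.1, slope=0.98)
print(f"insertion loss = {il:.3f} dB")

# Hypothetical port matches: 15 dB return loss on the source/DUT side,
# 6 dB at the bare SDR input. An ideal pad of A dB adds 2*A dB of return loss.
rl_source, rl_sdr = 15.0, 6.0
for pad_db in (0.0, 6.0, 10.0):
    lo, hi = mismatch_limits_db(rl_source, rl_sdr + 2 * pad_db)
    print(f"pad {pad_db:4.1f} dB: mismatch limits {lo:+.3f} / {hi:+.3f} dB")
```

The exact figures depend entirely on the assumed return losses, but the trend is the point: each dB of pad buys two dB of effective input match, at the cost of the same amount of signal level.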

An open item: to go further, one would need to check linearity at the various frequencies of interest, and so on, but this is beyond what an SDR USB stick is meant for. If you need accuracy better than 0.5 dB, that is better done in a cal lab anyway, and it is rarely needed out in the field.

For comparison, with a high-precision dedicated level measurement receiver (such as the Micro-Tel 1295), the achievable relative level accuracy (not linearity) over 10 dB is about 0.03 to 0.05 dB, and over a much wider frequency range. Also note that most commonly available spectrum analyzers (Rigol, etc.) aren't all that linear; check their specifications.