A time interval counter: this little device, based on an Atmel AVR ATmega32L, assigns 64-bit timestamps to events (an event being a rising edge on the INT1 interrupt input). Its time base is a 10 MHz OCXO, a Trimble 65256 double-oven oscillator, so the resolution is 100 ns. The main purpose is precise monitoring of pendulum clocks, in combination with a data logger that records temperature, air pressure and real-time-clock data.
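Because every timestamp is simply a count of 10 MHz reference cycles, an interval between two events is just a subtraction. The following is a minimal host-side sketch in Python. It assumes the raw timestamps reach the PC as unsigned 64-bit tick counts of 100 ns each; `TICK_HZ` and `interval_seconds` are hypothetical names and are not part of the TIC4 firmware or its serial protocol.

```python
TICK_HZ = 10_000_000  # assumed: one tick = one 10 MHz reference cycle (100 ns)


def interval_seconds(earlier: int, later: int) -> float:
    """Interval between two raw 64-bit timestamps, in seconds.

    The difference is taken modulo 2**64, so a counter wrap would still
    give the right answer. Subtracting the integers before converting to
    float keeps the full 100 ns resolution.
    """
    return ((later - earlier) % (1 << 64)) / TICK_HZ


# Made-up example: two beats nominally 1 s apart, the second one 35 us late.
t0 = 123_456_789
t1 = t0 + TICK_HZ + 350
print(f"{interval_seconds(t0, t1):.7f} s")  # 1.0000350 s

# Time until a 64-bit counter at 10 MHz wraps, in 365-day years.
print(round((1 << 64) / TICK_HZ / (365 * 86400)))  # 58494
```

The subtract-then-convert order is the design choice that matters. A double has only a 53-bit mantissa, so converting large absolute counts to seconds first would gradually eat into the 100 ns resolution as the counts grow.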

Why TIC4? Well, there are several other, earlier designs, some of them with better resolution through interpolation (via a clock synchronizer and an interpolation circuit). For the purpose at hand, though, a resolution of a few microseconds is all that's needed. It is really hard to detect the zero crossing of a mechanical pendulum any more precisely than that.

For test purposes, I ran the circuit overnight on a 16 MHz clock, with ordinary 20 Hz and 2 Hz signals at the input (neither very precise nor phase-locked), to check for any glitches.

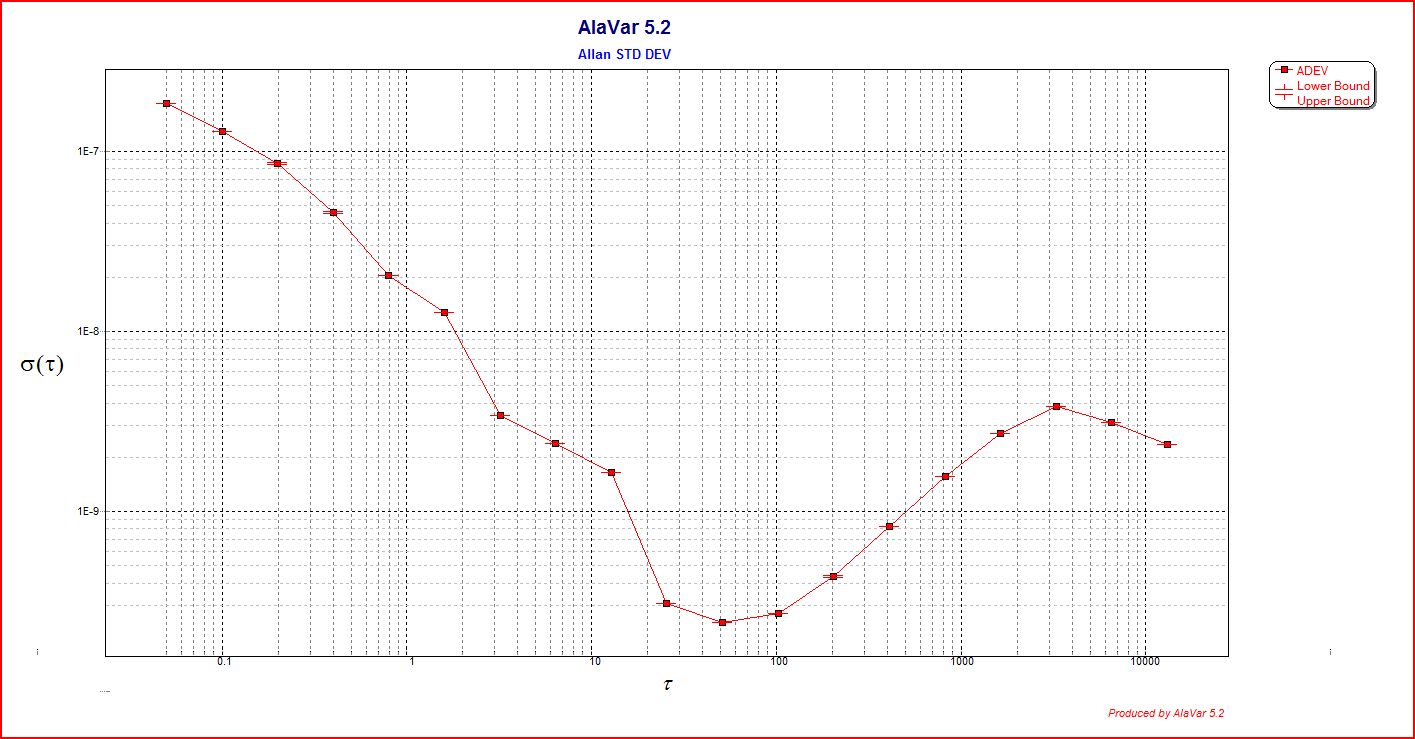

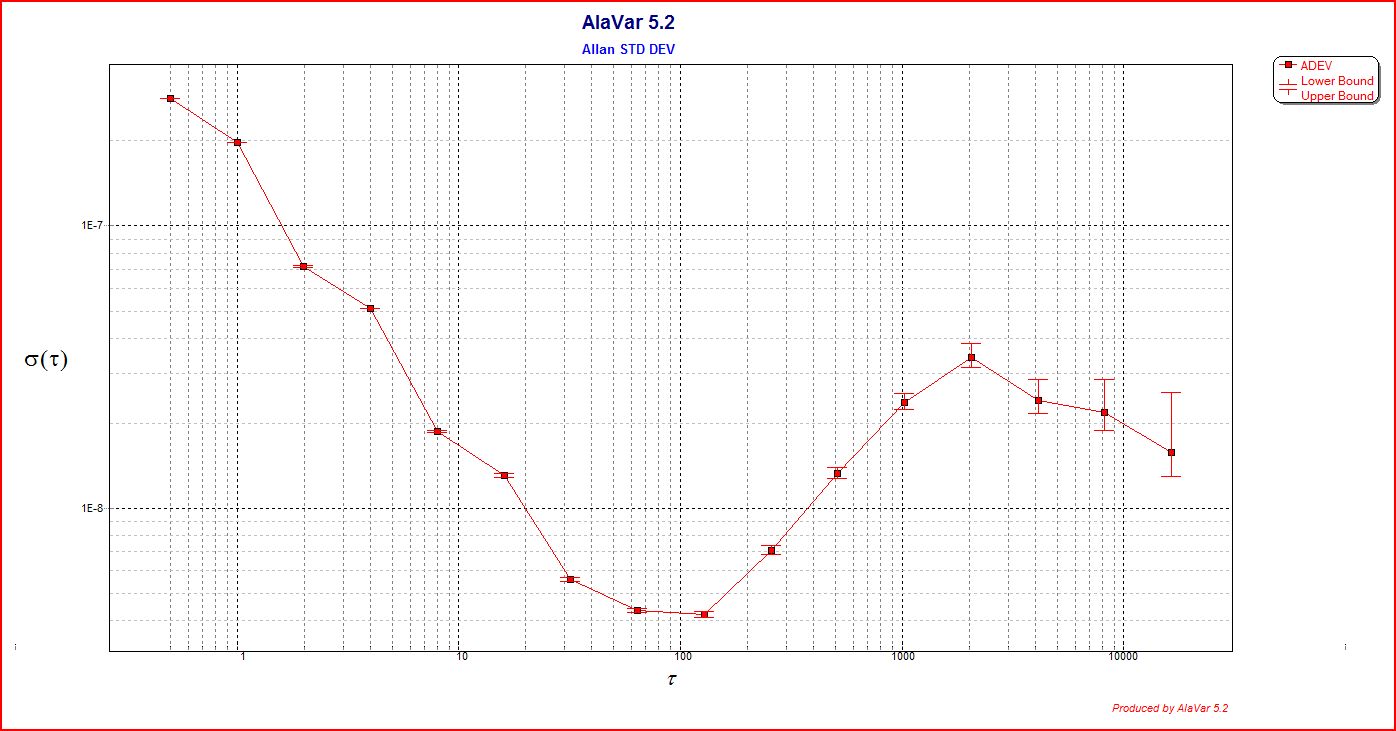

The Allan deviation plots show that the timing accuracy for single events is about 150 to 200 ns, close to what is theoretically possible with a 16 MHz clock.

The AVR program code looks simple, but believe me, it isn't. There are quite a few pitfalls, because whenever the interrupt fires, a precise timestamp has to be generated and transmitted to the host. The nominal maximum timestamp rate is 100 Hz (one timestamp every 10 ms), but the counter works up to about 150 Hz without missing any events. Timestamps are transmitted at each overflow of the 16-bit timer, i.e. roughly every 6.55 ms (65,536 × 100 ns). Each timestamp plus control information is 12 bytes, which is 120 bits on the wire with 8N1 framing (start bit, 8 data bits, stop bit). At 57,600 baud, that takes about 2.1 ms.
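A classic pitfall when a 16-bit hardware timer is extended to 64 bits in software is the race between the event interrupt and the timer-overflow interrupt. If the timer wraps just before or during the event ISR, the overflow ISR has not yet incremented the software counter. The following sketch models one common fix in Python. It assumes, and the post does not confirm this, that the firmware reads TCNT1 in the INT1 ISR, then checks the TOV1 flag in TIFR, and keeps the upper 48 bits in a software overflow counter. `extend_timestamp` is a hypothetical name. On the AVR itself, this logic would live in C inside the ISR.

```python
def extend_timestamp(overflow_count: int, tcnt: int, overflow_pending: bool) -> int:
    """Build a 64-bit timestamp from a software overflow count and a 16-bit timer value.

    overflow_count:   overflows already counted by the overflow ISR (upper 48 bits)
    tcnt:             16-bit timer value, read first in the event ISR
    overflow_pending: overflow flag, read after tcnt (overflow ISR not yet run)
    """
    if overflow_pending and tcnt < 0x8000:
        # Small value with a pending flag: the timer wrapped before it was
        # read, so the not-yet-counted overflow belongs to this timestamp.
        overflow_count += 1
    # A large value with the flag set means the wrap came between reading the
    # timer and reading the flag, so overflow_count is already correct.
    return ((overflow_count << 16) | (tcnt & 0xFFFF)) & 0xFFFF_FFFF_FFFF_FFFF


# Event 3 ticks after the 5th wrap, before the overflow ISR has run:
print(hex(extend_timestamp(4, 0x0003, True)))  # 0x50003
# Timer read at 0xFFFE, wrap happened just before the flag was checked:
print(hex(extend_timestamp(4, 0xFFFE, True)))  # 0x4fffe
```

The half-range test (0x8000) assumes the event ISR always reads the timer within about 3.3 ms (32,768 ticks) of a wrap, which a short ISR easily meets. A side note on the numbers above: 10 MHz / 65,536 is about 152.6 overflows per second, close to the ~150 Hz ceiling. If the firmware sends at most one timestamp per overflow, that would account for the limit, though this is a guess, not something the post states.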

For test purposes, the serial data is sent to a PC via a MAX3232 TTL-to-RS-232 level converter. Alavar is then used to turn the data into Allan deviation plots.
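The post uses Alavar for the Allan deviation analysis. To show roughly what such a tool computes, the following is a minimal sketch of the standard overlapping Allan deviation estimator, applied to time-error (phase) data in the form given in NIST SP 1065. It assumes events with a fixed nominal spacing (for example, one pendulum beat) and timestamps in 10 MHz ticks. `time_errors` and `overlapping_adev` are hypothetical names, and this is not Alavar's code.

```python
import math
import random


def time_errors(timestamps, nominal_ticks, tick_hz=10_000_000):
    """Time error x_i, in seconds, of each event against an ideal clock.

    Hypothetical preprocessing: x_i = (t_i - t_0) - i * nominal_ticks,
    converted from ticks (assumed 100 ns each) to seconds.
    """
    t0 = timestamps[0]
    return [((t - t0) - i * nominal_ticks) / tick_hz
            for i, t in enumerate(timestamps)]


def overlapping_adev(x, tau0, m):
    """Overlapping Allan deviation at tau = m * tau0 from time-error data x.

    sigma^2(tau) = sum_i (x[i+2m] - 2*x[i+m] + x[i])^2 / (2 * tau^2 * (N - 2m))
    """
    n = len(x)
    if m < 1 or n < 2 * m + 1:
        raise ValueError("need m >= 1 and at least 2*m + 1 samples")
    tau = m * tau0
    total = sum((x[i + 2 * m] - 2 * x[i + m] + x[i]) ** 2
                for i in range(n - 2 * m))
    return math.sqrt(total / (2 * tau * tau * (n - 2 * m)))


# Made-up beats at a nominal 1 s, with a few ticks of random jitter each.
random.seed(1)
beats = [i * 10_000_000 + random.randint(-2, 2) for i in range(1000)]
x = time_errors(beats, 10_000_000)
for m in (1, 10, 100):
    print(m, overlapping_adev(x, 1.0, m))
```

For a pendulum beating once per second, `tau0` would be 1.0, and `m` selects the averaging time τ = m·τ0. A constant rate offset produces a linear time error, whose second differences vanish, so it contributes nothing to the Allan deviation. The estimator only responds to instability.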

The test showed not a single glitch in about 1.3 million events. Fair enough!

More details to follow.
